Overview

Adaptive interruption handling allows an agent to respond naturally when users speak mid-response. Instead of relying on fixed timing or volume thresholds, the model analyzes acoustic signals to distinguish intentional interruptions (barge-ins) from conversational backchanneling.

Backchanneling includes short listener cues such as "uh-huh," "okay," or "right" that indicate attention but don't require a response. By filtering these out, the agent avoids unnecessary turn switches caused by brief acknowledgments, incidental sounds, or background noise. The result is smoother, more natural interactions.

Adaptive interruption handling requires the following minimum Agents SDK versions:

- Python SDK v1.5.0 or greater

- Node.js SDK v1.2.0 or greater

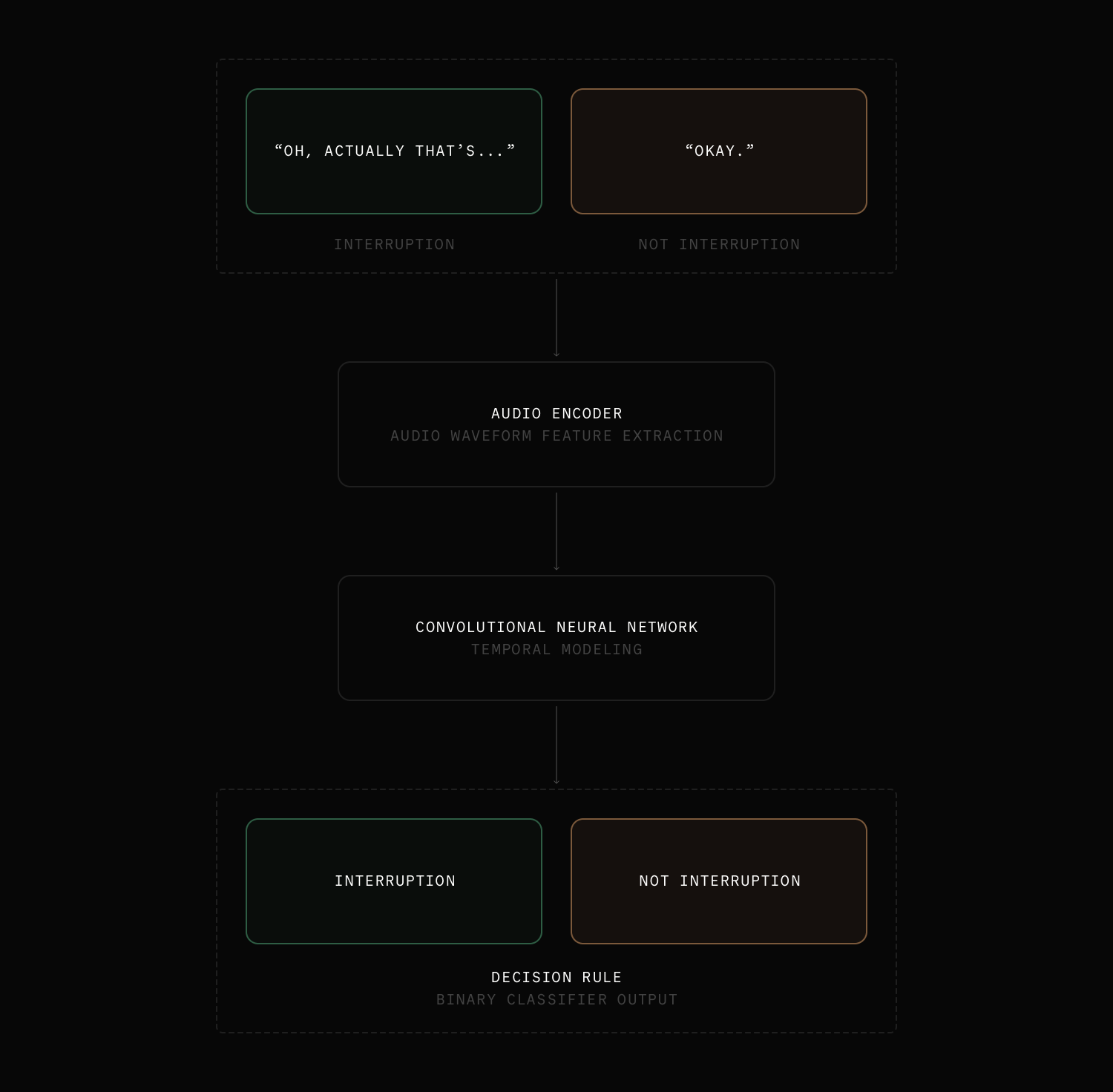

How it works

The adaptive interruption (barge-in) model is trained on real conversational audio to distinguish true interruption attempts from non-interruptive speech. It operates after voice activity detection (VAD) identifies incoming user audio.

Instead of immediately stopping the agent whenever speech is detected, the model analyzes the audio to determine whether the agent should actually yield the turn. Because the decision relies on acoustic signals and doesn't wait for a transcript, the model can respond faster and reduce unnecessary interruptions.
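The two-stage decision described above (VAD first, then the model) can be sketched as a simple gate. This is a simplified illustration, not LiveKit's implementation: the OverlapEvent class, the interruption_probability score, and the 0.5 threshold are hypothetical stand-ins for the model's internal output.

```python
from dataclasses import dataclass


@dataclass
class OverlapEvent:
    """Hypothetical snapshot taken while the agent is speaking."""
    vad_detected_speech: bool        # did VAD flag incoming user audio?
    interruption_probability: float  # hypothetical model score in [0, 1]


def vad_only_should_yield(event: OverlapEvent) -> bool:
    # VAD-only: any detected user speech stops the agent.
    return event.vad_detected_speech


def adaptive_should_yield(event: OverlapEvent, threshold: float = 0.5) -> bool:
    # Adaptive: the model is only consulted after VAD detects speech,
    # and the agent yields only if the score indicates a real barge-in.
    if not event.vad_detected_speech:
        return False
    return event.interruption_probability >= threshold


backchannel = OverlapEvent(vad_detected_speech=True, interruption_probability=0.1)
barge_in = OverlapEvent(vad_detected_speech=True, interruption_probability=0.9)

print(vad_only_should_yield(backchannel), adaptive_should_yield(backchannel))  # True False
print(vad_only_should_yield(barge_in), adaptive_should_yield(barge_in))        # True True
```

Both strategies stop the agent for the barge-in, but only the VAD-only strategy also stops it for the backchannel.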

The adaptive interruption model is designed to work with any spoken language, although it might perform better with English in some cases.

How to use

Adaptive interruption handling is available in LiveKit Cloud and is enabled by default when all of the following conditions are met:

- The agent is deployed to LiveKit Cloud or running in dev mode.

- VAD is enabled.

- The LLM is not a realtime model.

- The STT model supports aligned transcripts.

If any of these conditions isn't met, the agent falls back to VAD for interruption detection.
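The enablement rules above reduce to a single boolean check. The sketch below only restates the documented conditions; the function and parameter names are hypothetical and are not part of the Agents SDK.

```python
def resolve_interruption_mode(
    on_cloud_or_dev_mode: bool,
    vad_enabled: bool,
    llm_is_realtime: bool,
    stt_supports_aligned_transcript: bool,
) -> str:
    """Return the default interruption mode implied by the documented conditions."""
    # Adaptive handling is the default only when every condition holds.
    if (
        on_cloud_or_dev_mode
        and vad_enabled
        and not llm_is_realtime
        and stt_supports_aligned_transcript
    ):
        return "adaptive"
    # Per the documented fallback, anything else relies on VAD.
    return "vad"


print(resolve_interruption_mode(True, True, False, True))  # adaptive
print(resolve_interruption_mode(True, True, True, True))   # vad (realtime LLM)
```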

Adaptive interruption handling has no usage limit for agents deployed to LiveKit Cloud. It's also available for local development, subject to a monthly request quota.

You can also explicitly set the interruption mode to "adaptive" in the turn handling options:

```python
session = AgentSession(
    # ... stt, llm, tts, vad
    turn_handling=TurnHandlingOptions(
        turn_detection=MultilingualModel(),
        interruption={
            "mode": "adaptive",
        },
    ),
)
```

```typescript
const session = new voice.AgentSession({
  // ... stt, llm, tts, vad
  turnHandling: {
    turnDetection: new livekit.turnDetector.MultilingualModel(),
    interruption: {
      mode: 'adaptive',
    },
  },
});
```

Audio samples

Compare the following audio samples to see how the agent responds to interruptions and non-interruptions with and without adaptive interruption handling.

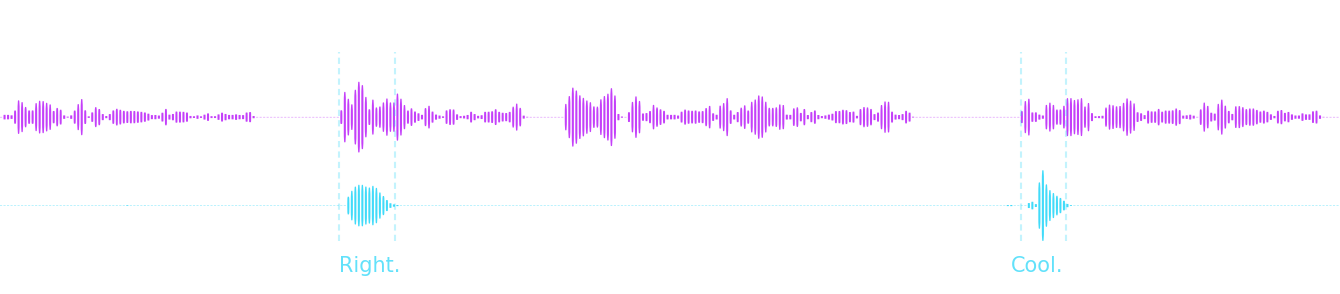

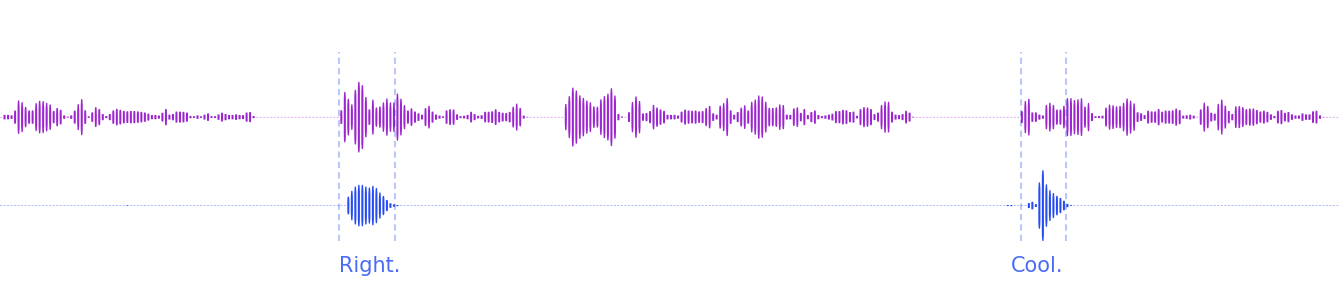

Using adaptive interruption handling

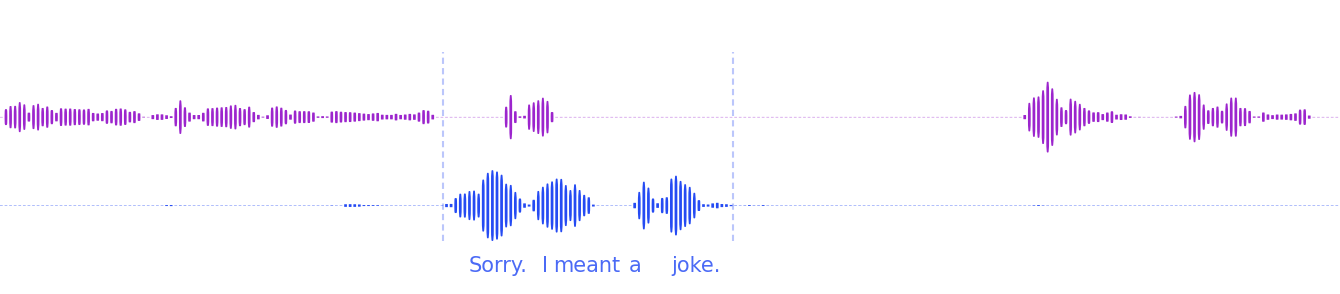

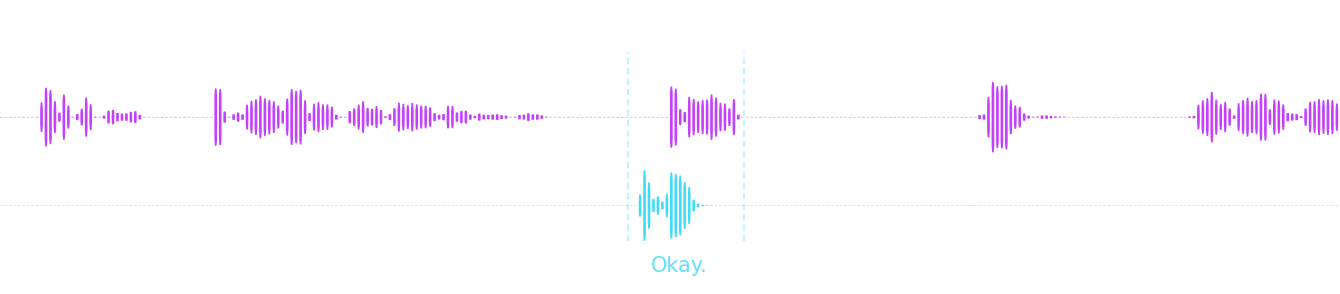

The following samples have adaptive interruption handling enabled. They demonstrate how the agent ignores brief acknowledgments that only signal attention but responds to genuine interruptions (barge-ins).

Backchannel (non-interruption)

Genuine interruption (barge-in)

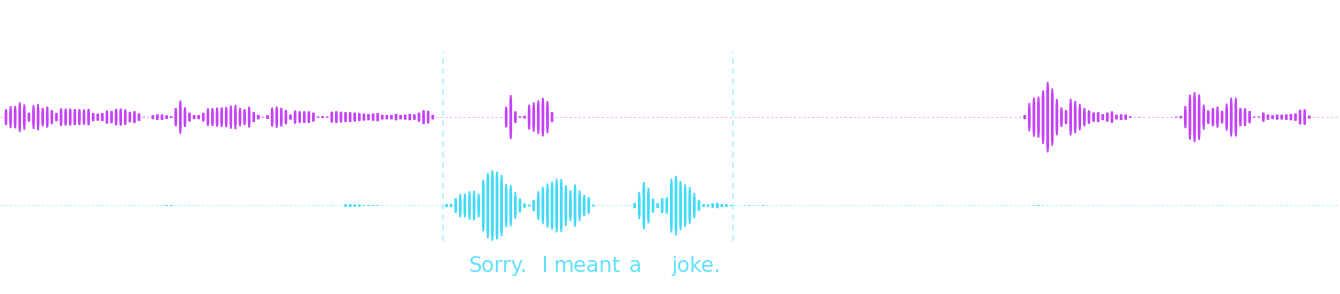

Without adaptive interruption handling

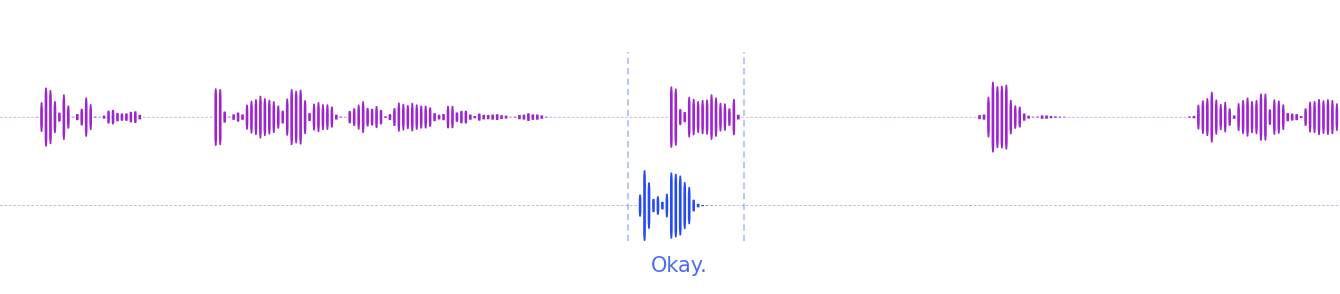

This sample uses VAD-only detection. Without adaptive interruption handling, the user's brief acknowledgment interrupts the agent mid-response.

VAD-only detection

Performance

The adaptive interruption model analyzes streaming audio chunks during overlapping speech to determine whether a detected speech segment is a true interruption.
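To make "overlapping speech" concrete, the sketch below finds the window where user speech overlaps agent speech and splits it into fixed-size chunks. It is a simplified picture under stated assumptions: the millisecond interval representation and the 200 ms chunk size are hypothetical and don't describe the model's actual framing.

```python
from typing import List, Optional, Tuple

Interval = Tuple[int, int]  # (start_ms, end_ms), end exclusive


def overlap(a: Interval, b: Interval) -> Optional[Interval]:
    """Return the overlapping part of two intervals, or None if they don't overlap."""
    start, end = max(a[0], b[0]), min(a[1], b[1])
    return (start, end) if start < end else None


def chunk(interval: Interval, chunk_ms: int) -> List[Interval]:
    """Split an interval into consecutive chunks; the last one may be shorter."""
    start, end = interval
    return [(t, min(t + chunk_ms, end)) for t in range(start, end, chunk_ms)]


agent_speech = (0, 3000)    # agent speaks from 0 s to 3 s
user_speech = (2500, 4000)  # user starts talking at 2.5 s
window = overlap(agent_speech, user_speech)
print(window)              # (2500, 3000)
print(chunk(window, 200))  # [(2500, 2700), (2700, 2900), (2900, 3000)]
```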

The model runs on LiveKit Cloud's global inference infrastructure and adds minimal latency to the interruption pipeline. When agents are deployed to LiveKit Cloud, they run in the same data centers as the inference service, further reducing end-to-end latency.

Quota and limits

Adaptive interruption handling is included at no extra cost for all agents deployed to LiveKit Cloud.

For self-hosted agents, all plans include 40,000 inference requests per month, which is enough for local experimentation and development.
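A back-of-the-envelope estimate helps when budgeting local development against the monthly quota. The requests-per-session figure below is a hypothetical assumption; measure your own from the interruption metrics described in the metrics section of this page.

```python
MONTHLY_QUOTA = 40_000  # inference requests per month for self-hosted agents


def sessions_within_quota(requests_per_session: int) -> int:
    """Estimate how many sessions fit in the monthly quota at a given request rate."""
    if requests_per_session <= 0:
        raise ValueError("requests_per_session must be positive")
    return MONTHLY_QUOTA // requests_per_session


# Hypothetical: assume each test session triggers about 25 interruption checks.
print(sessions_within_quota(25))  # 1600
```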

Aligned transcripts support

An aligned transcript includes timing information that allows you to synchronize the transcript with the audio. Each word or chunk of speech is assigned a start and end timestamp. Support for aligned transcripts is required for adaptive interruption handling. All STT models provided by LiveKit Inference support aligned transcripts.
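As an illustration of what word-level timing makes possible, the sketch below models an aligned transcript as a list of words with start and end timestamps and returns the words spoken during a given time window, such as the period while the agent was talking. The AlignedWord type and words_in_window helper are hypothetical and don't mirror any SDK class.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AlignedWord:
    text: str
    start: float  # seconds from the start of the audio
    end: float    # seconds from the start of the audio


def words_in_window(words: List[AlignedWord], t0: float, t1: float) -> List[str]:
    """Return the words whose time span overlaps the window [t0, t1)."""
    return [w.text for w in words if w.start < t1 and w.end > t0]


transcript = [
    AlignedWord("sure", 0.00, 0.30),
    AlignedWord("that", 0.35, 0.55),
    AlignedWord("works", 0.60, 1.00),
]
print(words_in_window(transcript, 0.5, 0.8))  # ['that', 'works']
```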

For plugins, you can check whether the STT model supports aligned transcripts through the capabilities.aligned_transcript property (capabilities.alignedTranscript in Node.js):

```python
if stt.capabilities.aligned_transcript:
    print("This STT model supports aligned transcripts.")
```

```typescript
if (stt.capabilities.alignedTranscript) {
  console.log("This STT model supports aligned transcripts.");
}
```

Metrics and usage

When adaptive interruption handling is enabled, an agent session collects interruption metrics for each barge-in detection, including per-event latency and the number of requests and interruptions. Listen for the metrics_collected event to be notified when new InterruptionMetrics are available.
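The sketch below shows one way to aggregate per-event interruption metrics, for example from inside a metrics_collected handler. The InterruptionEvent record and its latency_ms and was_interruption fields are hypothetical stand-ins, and counting one request per event is also an assumption; check the InterruptionMetrics reference for the actual attribute names.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class InterruptionEvent:
    """Hypothetical stand-in for one interruption metrics record."""
    latency_ms: float       # hypothetical: time the model took to decide
    was_interruption: bool  # hypothetical: True if classified as a barge-in


def summarize(events: List[InterruptionEvent]) -> dict:
    """Count requests and interruptions and average the decision latency."""
    if not events:
        return {"requests": 0, "interruptions": 0, "avg_latency_ms": 0.0}
    return {
        "requests": len(events),  # assumes one request per event
        "interruptions": sum(e.was_interruption for e in events),
        "avg_latency_ms": mean(e.latency_ms for e in events),
    }


events = [
    InterruptionEvent(40.0, False),
    InterruptionEvent(60.0, True),
    InterruptionEvent(50.0, False),
]
print(summarize(events))  # {'requests': 3, 'interruptions': 1, 'avg_latency_ms': 50.0}
```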

Interruption model usage, including total requests per provider and model, is collected in ModelUsageCollector, available in session.usage.

To learn more, see Metrics and usage.

Turn off adaptive interruption handling

To use VAD-only interruption detection instead of adaptive handling, set the interruption mode to "vad" in the turn handling options:

```python
turn_handling = {
    "interruption": {
        "mode": "vad",
    },
}
```

```typescript
const turnHandling = {
  interruption: {
    mode: 'vad',
  },
};
```

To disable interruption handling entirely, set enabled to false in the interruption options. With interruptions disabled, user speech can't interrupt the agent.
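Following the same dictionary form as the VAD-only example, a configuration that disables interruptions entirely might look like the sketch below. The enabled key comes from the description above; treat the exact shape as an assumption and confirm it against the turn handling options reference for your SDK version.

```python
# Sketch: turn handling options with interruption handling turned off.
# The "enabled" key follows the text above; verify it in the SDK reference.
turn_handling = {
    "interruption": {
        "enabled": False,  # user speech will not interrupt the agent
    },
}
```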