Overview

The LiveKit Agent Builder lets you prototype and deploy simple voice agents through your browser, without writing any code. It's a great way to build a proof-of-concept, explore ideas, or stand up a working prototype quickly.

The Agent Builder produces best-practice Python code using the LiveKit Agents SDK and deploys your agents directly to LiveKit Cloud. The result is an agent that is fully compatible with the rest of LiveKit Cloud, including LiveKit Inference, agent insights, and agent dispatch. You can continue iterating on your agent in the builder, or convert it to code at any time to refine its behavior using SDK-only features.

Access the Agent Builder by selecting Deploy new agent in your project's Agents dashboard.

After you deploy an agent to LiveKit Cloud, you can add it to a website with the hosted Agent Embed Widget.

Agent features

The following provides a short overview of the features available to agents built in the Agent Builder.

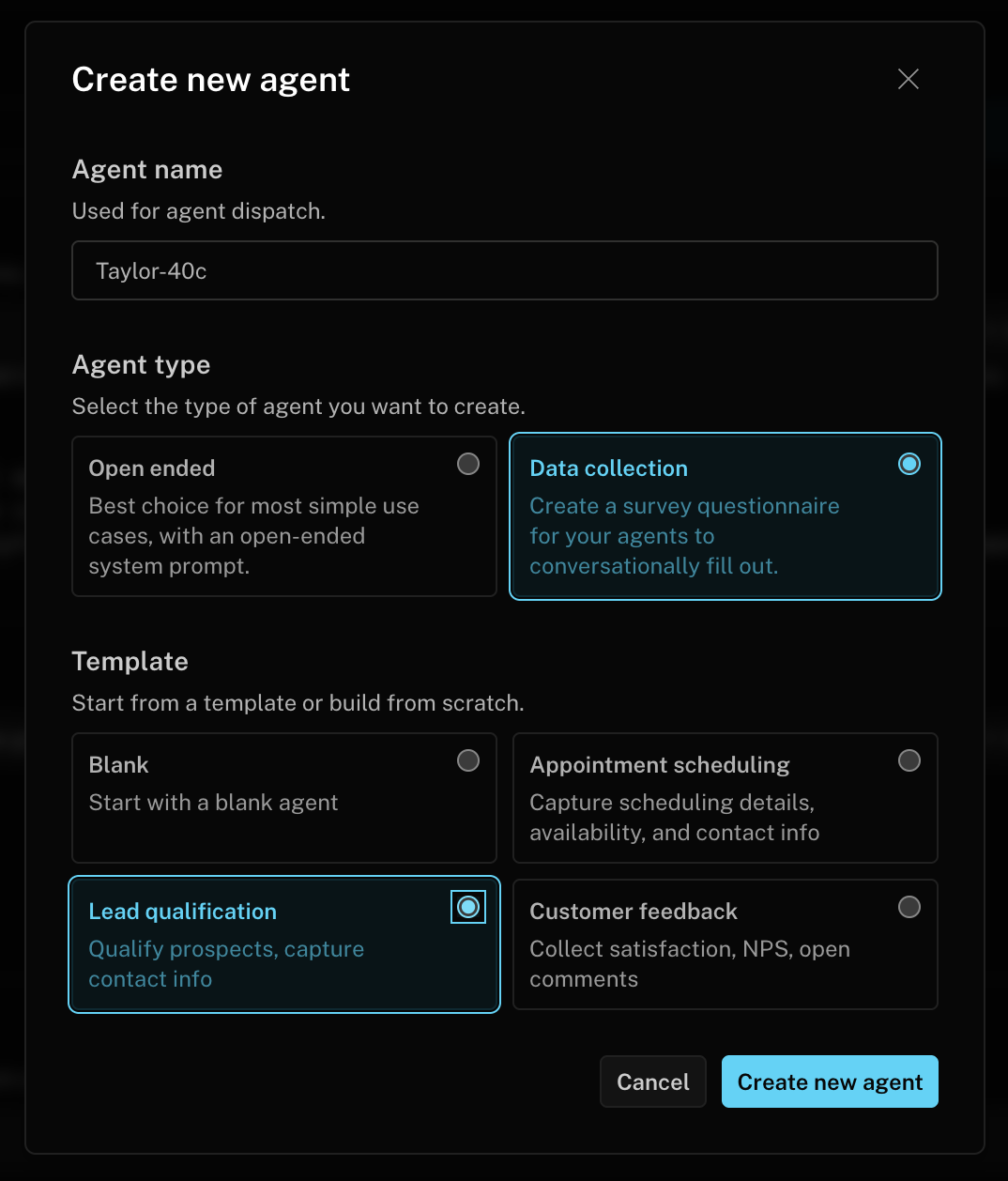

Agent name

The agent name is used for explicit agent dispatch. Be careful if you change the name after deploying your agent, as it may break existing dispatch rules and frontends.

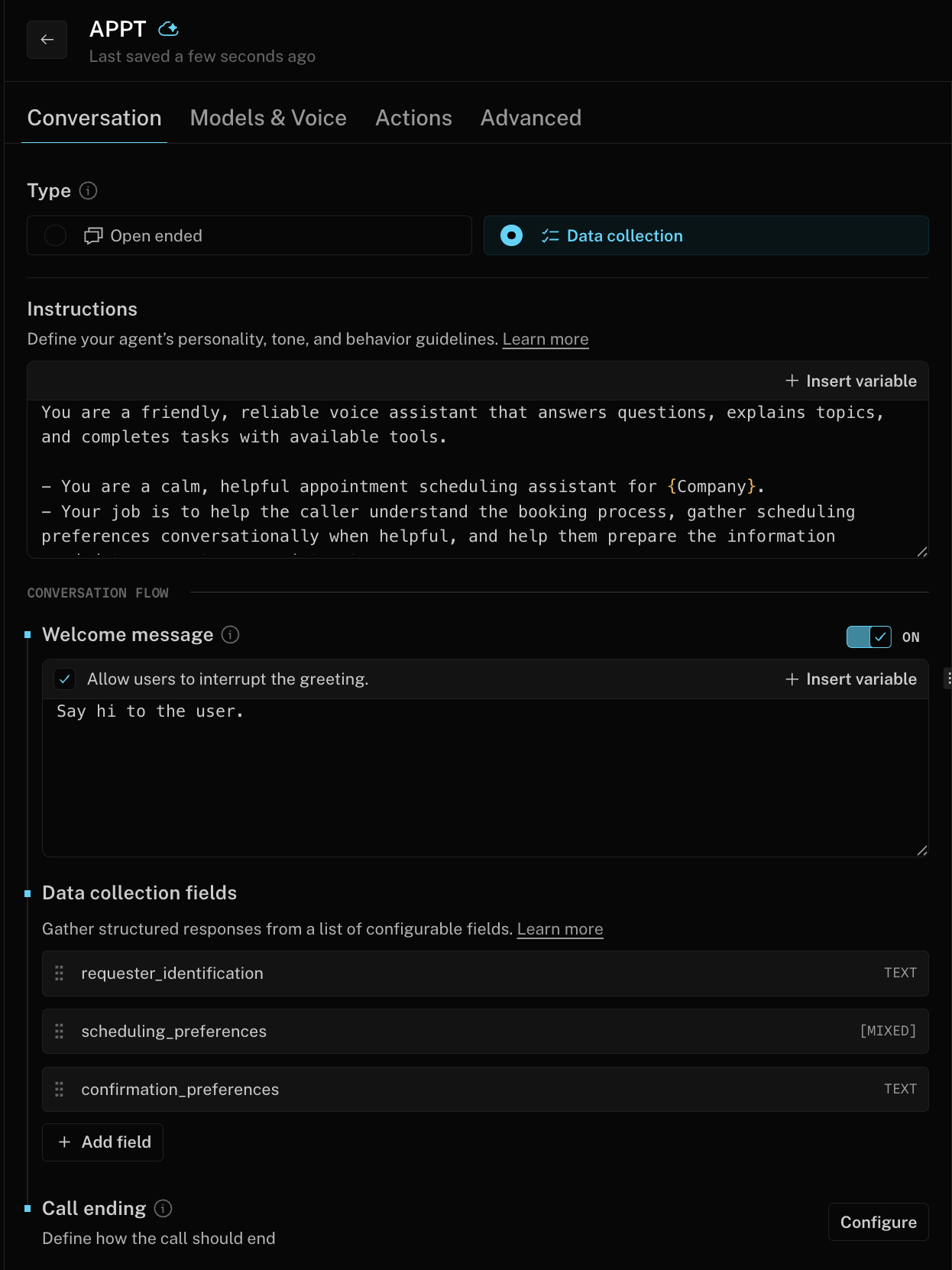

Instructions

The instructions are the most important component of any agent. You write a single prompt that controls the agent's identity and behavior. See the prompting guide for tips on how to write a good prompt. You can use variables to include dynamic information in your prompt.

Data collection

Choose between an open-ended prompted conversation or data collection mode. In data collection mode, the agent extracts specific fields you define — such as names, preferences, or answers to questions — and returns them as structured results at the end of the call.

Data collection configuration includes:

- Fields: Define the data points your agent should collect. Each field has a name, a description that guides the LLM, and a type that the value must conform to (string, number, boolean, object, or list).

- Single or multiple answers: Fields can collect a single value or a list of values, depending on whether the caller may provide more than one answer.

- Required or optional: Mark fields as required to ensure the agent attempts to collect them before the call ends.

Collected results are sent to your configured summary endpoint at the end of the call. See Data collection mode results for the payload format.

Welcome greeting

You can choose whether your agent greets the user when they join the call. If it does, you can also write custom instructions for the greeting, which supports variables for dynamic content.

Models

Your agents support most of the models available in LiveKit Inference to construct a high-performance STT-LLM-TTS pipeline. Consult the documentation on Speech-to-text, Large language models, and Text-to-speech for more details on supported models and voices.

Actions

Extend your agent's functionality with tools that allow your agent to interact with external systems and services. The Agent Builder supports three types of tools:

HTTP tools

HTTP tools call external APIs and services. HTTP tools support the following features:

- HTTP Method: GET, POST, PUT, DELETE, PATCH

- Endpoint URL: The endpoint to call, with optional path parameters using a colon prefix, for example :user_id

- Parameters: Query parameters (GET) or JSON body (POST, PUT, DELETE, PATCH), with optional type and description.

- Headers: Optional HTTP headers for authentication or other purposes, with support for secrets and metadata.

- Silent: When enabled, hides the tool call result from the agent and prevents the agent from generating a response. Useful for tools that perform actions without needing acknowledgment.
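As a rough illustration of how colon-prefixed path parameters behave, the sketch below fills placeholders such as :user_id in an endpoint URL. The helper name `fill_path_params` and the example URL are hypothetical. The Agent Builder performs this substitution for you, and its actual implementation may differ.

```python
import re
from urllib.parse import quote

# Matches a colon-prefixed name at the start of a path segment, e.g. "/:user_id".
_PATH_PARAM = re.compile(r"(?<=/):([A-Za-z_][A-Za-z0-9_]*)")


def fill_path_params(url_template: str, params: dict[str, object]) -> str:
    """Replace each ":name" path segment with the URL-encoded value of params["name"]."""

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in params:
            raise KeyError(f"missing path parameter: {name}")
        # Encode every reserved character so the value stays inside one segment.
        return quote(str(params[name]), safe="")

    return _PATH_PARAM.sub(substitute, url_template)


# Hypothetical endpoint, for illustration only.
url = fill_path_params(
    "https://api.example.com/users/:user_id/orders",
    {"user_id": "a b/42"},
)
print(url)
```

Encoding the value before substitution keeps a caller-supplied string from adding extra path segments to the request.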

Client tools

Client tools connect your agent to client-side RPC methods to retrieve data or perform actions. This is useful when the data needed to fulfill a function call is only available at the frontend, or when you want to trigger actions or UI updates in a structured way. Client tools support the following features:

- Description: The tool's purpose, outcomes, usage instructions, and examples.

- Parameters: Arguments passed by the LLM when the tool is called, with optional type and description.

- Preview response: A sample response returned by the client, used to help the LLM understand the expected return format.

- Silent: When enabled, hides the tool call result from the agent and prevents the agent from generating a response. Useful for tools that perform actions without needing acknowledgment.

See the RPC documentation for more information on implementing client-side RPC methods.

Combining custom tools with Data Collection mode can bias the agent toward greedy tool execution — calling tools at the expense of natural conversation flow. To mitigate this, place any prompt that could trigger a tool call in the instructions for the specific field where it's relevant, rather than in the main agent instructions. This gives you fine-grained control over when and how tools are invoked.

MCP servers

Configure external Model Context Protocol (MCP) servers for your agent to connect and interact with. MCP servers expose tools that your agent can discover and use automatically, and support both streaming HTTP and SSE protocols. MCP servers support the following features:

- Server name: A human-readable name for this MCP server.

- URL: The endpoint URL of the MCP server.

- Headers: Optional HTTP headers for authentication or other purposes, with support for secrets and metadata.

See the tools documentation for more information on MCP integration.

Variables and metadata

Your agents automatically parse Job metadata as JSON and make the values available as variables in fields such as the instructions and welcome greeting. To add mock values for testing, and to add hints to the editor interface, define the metadata you intend to pass in the Advanced tab in the Agent Builder.

For instance, you can add a metadata field called user_name. When you dispatch the agent, include JSON {"user_name": "<value>"} in the metadata field, populated by your frontend app. The agent can access this value in instructions or greeting using {{metadata.user_name}}.
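The following is a minimal sketch of the idea, not the builder's actual implementation: it parses a JSON metadata string and fills {{metadata.key}} placeholders in a prompt. The function name `render_template` and the choice to render unknown keys as an empty string are assumptions made for illustration.

```python
import json
import re

# Matches placeholders of the form {{metadata.key}}.
_PLACEHOLDER = re.compile(r"\{\{\s*metadata\.([A-Za-z_][A-Za-z0-9_]*)\s*\}\}")


def render_template(template: str, metadata_json: str) -> str:
    """Fill {{metadata.key}} placeholders from a JSON metadata string."""
    metadata = json.loads(metadata_json) if metadata_json else {}

    def substitute(match: re.Match) -> str:
        # Assumption: keys missing from the metadata render as an empty string.
        return str(metadata.get(match.group(1), ""))

    return _PLACEHOLDER.sub(substitute, template)


# A frontend would serialize the metadata like this when dispatching the agent.
job_metadata = json.dumps({"user_name": "Ada"})
print(render_template("Greet {{metadata.user_name}} by name.", job_metadata))
```

Defining the same keys with mock values in the Advanced tab lets you check the rendered prompt in the preview before your frontend sends real metadata.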

Secrets

Secrets are secure variables that can store sensitive information like API keys, database credentials, and authentication tokens. The Agent Builder uses the same secrets store as other LiveKit Cloud agents, and you can manage secrets in the same way.

Secrets are available as variables inside tool header values. For instance, if you have set a secret called ACCESS_TOKEN, then you can add a tool header with the name Authorization and value Bearer {{secrets.ACCESS_TOKEN}}.

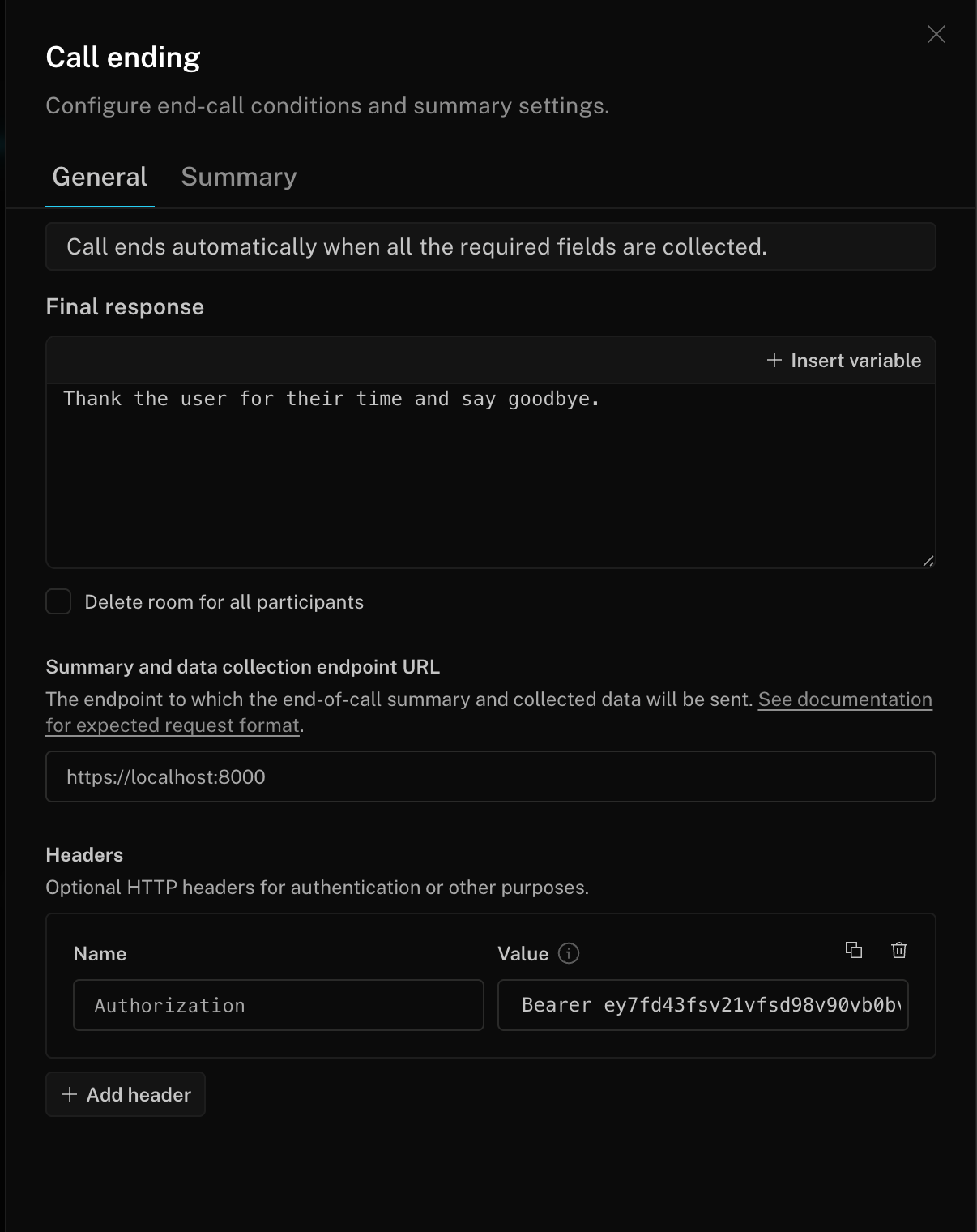

Call ending

Configure how each call wraps up, where the results are sent, and how the conversation is summarized.

When data collection is enabled, the call ends automatically once all required fields have been collected, so you don't need to wire up a custom end-call tool. The agent then optionally delivers a final spoken response, posts the collected results and an LLM-generated summary to your endpoint, and disconnects.

General settings

- Final response: A prompt the agent uses to deliver a closing message before the call ends, for example "Thank the user for their time and say goodbye." Supports template variables.

- Delete room for all participants: When enabled, the room is closed for everyone when the call ends, instead of just disconnecting the agent. Useful for one-on-one flows where the room shouldn't outlive the agent.

- Summary and data collection endpoint URL: The endpoint to which the end-of-call summary and collected data are sent via HTTP POST. See Endpoint payload below for the request format.

- Headers: Optional HTTP headers for authentication or other purposes, with support for secrets and metadata.

Summary settings

When enabled, the agent automatically generates a summary of the conversation using the selected LLM and includes it in the request to the configured endpoint.

- Large language model (LLM): The language model used to generate the end-of-call summary.

- Thinking effort: Controls how much reasoning effort the model uses. Only available for reasoning models such as GPT-5 and GPT-5.1.

- Summary instructions: Custom instructions for how to generate the summary. Supports template variables such as {{metadata.key}} and {{secrets.key}}. Leave empty to use the default summary format.

Endpoint payload

The endpoint receives an HTTP POST request with the following JSON body:

| Field | Type | Description |
|---|---|---|
| job_id | string | The unique identifier for the agent job. |
| room_id | string | The unique identifier for the room. |
| room | string | The room name. |
| started_at | string | ISO 8601 timestamp of when the session started. |
| ended_at | string | ISO 8601 timestamp of when the session ended. |
| summary | string | The generated call summary text (optional). |
| results | object | Data collection mode results (optional). |

Preview summary

You can preview summaries during a live test call by clicking the Generate summary button in the preview panel. This uses the current call summary configuration to generate a summary from the conversation so far, without ending the call.

Data collection mode results

When your agent uses data collection mode, the results are sent to the same summary endpoint URL. The POST body includes:

- results: Always present in data collection mode. Contains the data your fields captured.

- summary: Present when call summary generation is enabled in Call ending options.

The results object contains fields defined in your data collection configuration. Each field is either a single object (for single-answer fields) or an array of objects (for fields that collect multiple answers):

    {
      "method": "POST",
      "url": "<your configured endpoint URL>",
      "path": "<your configured endpoint URL path>",
      "query": {},
      "headers": {
        "accept": "*/*",
        "accept-encoding": "gzip, deflate",
        "content-length": "<content length>",
        "content-type": "application/json",
        "host": "<your configured endpoint URL base>",
        "user-agent": "Python/3.13 aiohttp/3.13.3"
      },
      "body": {
        "job_id": "<job_id>",
        "room_id": "<room_id>",
        "room": "<room>",
        "started_at": "<timestamp>",
        "ended_at": "<timestamp>",
        "summary": "<the selected LLM-generated summary string>",
        "results": {
          "<field name (single answer)>": {
            "<result name>": "<value string/bool/number>",
            ...
          },
          "<field name (answers list)>": [
            {
              "<result name>": "<value string/bool/number>",
              ...
            },
            ...
          ]
        }
      }
    }
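On the receiving side, a handler might parse the POST body along these lines. This is a sketch under stated assumptions: the function name `parse_end_of_call` and the sample values are hypothetical, the input is the JSON body described in the Endpoint payload table, and each field's answers are normalized to a list so single-answer and multi-answer fields can be handled the same way.

```python
import json
from datetime import datetime


def _parse_timestamp(value: str) -> datetime:
    # Older Python versions reject a trailing "Z", so normalize it to an offset.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def parse_end_of_call(body: bytes) -> dict:
    """Extract the documented fields from an end-of-call POST body."""
    payload = json.loads(body)
    started = _parse_timestamp(payload["started_at"])
    ended = _parse_timestamp(payload["ended_at"])

    answers = {}
    for field, value in (payload.get("results") or {}).items():
        # Single-answer fields arrive as one object, multi-answer fields as a list.
        answers[field] = value if isinstance(value, list) else [value]

    return {
        "job_id": payload["job_id"],
        "room": payload["room"],
        "duration_seconds": (ended - started).total_seconds(),
        "summary": payload.get("summary"),  # absent when summaries are disabled
        "answers": answers,
    }


# Hypothetical sample body, for illustration only.
sample = json.dumps({
    "job_id": "job-1",
    "room_id": "room-id-1",
    "room": "demo-room",
    "started_at": "2025-01-01T12:00:00Z",
    "ended_at": "2025-01-01T12:03:30Z",
    "summary": "Caller asked about billing.",
    "results": {
        "contact": {"name": "Ada"},
        "topics": [{"topic": "billing"}, {"topic": "support"}],
    },
}).encode()

parsed = parse_end_of_call(sample)
print(parsed["duration_seconds"], parsed["answers"]["contact"])
```

Because both summary and results are optional in the payload, the sketch reads them with `get` rather than indexing directly.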

Other features

Your agent is built to use the following features, which are recommended for all voice agents built with LiveKit:

- Background voice cancellation to improve agent comprehension and reduce false interruptions.

- Preemptive generation to improve agent responsiveness and reduce latency.

- LiveKit turn detector for best-in-class conversational behavior.

Agent preview

The Agent Builder includes a live preview mode to talk to your agent as you work on it. This is a great way to quickly test your agent's behavior and iterate on your prompt or try different models and voices. Changes made in the builder are automatically applied to the preview agent.

Sessions with the preview agent use your own project's LiveKit Inference credits, but do not otherwise count against LiveKit Cloud usage. They also do not appear in Agent observability for your project.

Deploying to production

To deploy your agent to production, click the Deploy agent button in the top right corner of the builder. Your agent is now deployed just like any other LiveKit Cloud agent. See the guides on custom frontends and telephony integrations for more information on how to connect your agent to your users.

Test in Console

After your agent is deployed to production, test it in Agent Console by clicking Launch Console in the top right corner of the builder.

To build your own frontend for development and testing, use the project token server or follow the custom frontends guide.

Observing production sessions

After deploying your agent, you can observe production sessions in the Agent insights tab in your project's sessions dashboard.

Convert to code

At any time, you can convert your agent to code by choosing the Download code button in the top right corner of the builder. This downloads a ZIP file containing a complete Python agent project, ready to deploy with the LiveKit CLI. Once you have deployed the new agent, you should delete the old agent in the builder so it stops receiving requests.

The generated project includes a README and an AGENTS.md file with best practices and integration with the LiveKit CLI and Docs MCP server so coding agents like Claude Code and Cursor can build with LiveKit expertise.

Limitations

The Agent Builder is not intended to replace the LiveKit Agents SDK. Instead, it makes it easier to get started with voice agents, which you can extend with custom code after a proof-of-concept. The following Agents SDK features are not currently supported in the builder:

- Workflows, including handoffs and tasks

- Virtual avatars

- Vision

- Realtime models and model plugins

- Tests

Billing and limits

The Agent Builder is subject to the same quotas and limits as any other agent deployed to LiveKit Cloud. There is no additional cost to use the Agent Builder.